
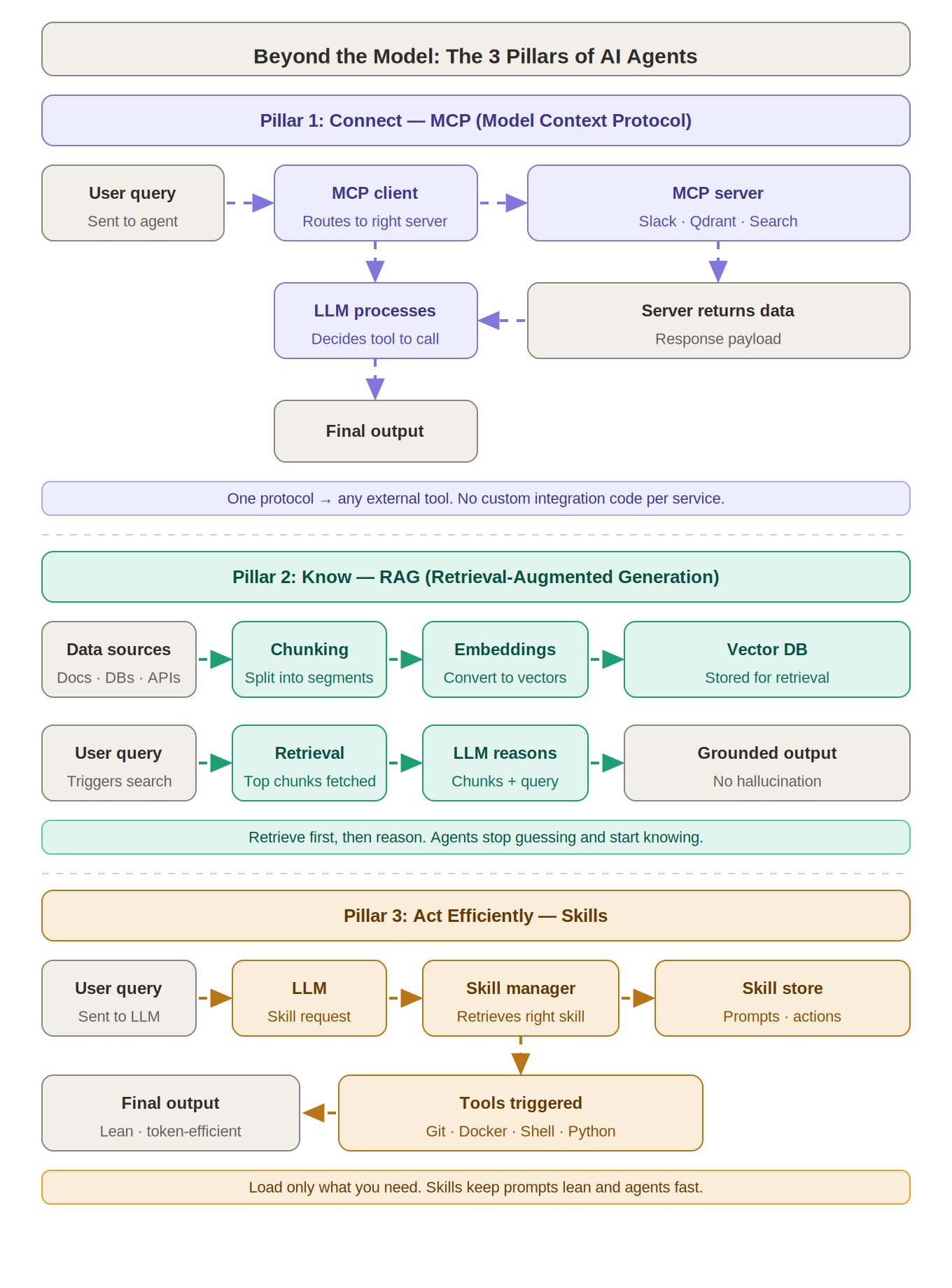

Beyond the Model: The 3 Pillars of AI Agents

Most teams building AI agents start with the model choice, the UI, or the deployment pipeline.

Those matter eventually, but the real foundations of a production-grade agent often get added late, or not at all.

I want to talk about the three core pillars of robust AI agents and the purpose-built tech that supports each one. Nail these, and you will have an agent that can truly scale and deliver.

Pillar 1: Connect - MCP (Model Context Protocol)

Every serious AI agent will need to plug into external tools and data sources, including databases, APIs, search engines, messaging platforms, and more.

If you custom-code every new integration from scratch, the integration layer becomes brittle and hard to maintain. Things break whenever an API changes.

Model Context Protocol, or MCP, is an open standard that tackles this by giving your agent one unified way to connect to external services. Instead of writing bespoke connectors each time, your agent uses an MCP client to talk to MCP servers, and each server handles the tool-specific calls.

How it works

Query -> MCP client -> appropriate MCP server -> data is fetched -> agent responds

In short, with MCP your agent only needs to speak one language to interact with everything.

Pillar 2: Know - RAG (Retrieval-Augmented Generation)

Out of the box, even the best LLM has knowledge limits. There is a cutoff to its training data, and it will not know anything about your latest internal documents or up-to-the-minute facts. Ask it anyway, and it may confidently make something up.

Retrieval-Augmented Generation, or RAG, addresses that by giving the agent access to external knowledge at query time, before it answers. The agent retrieves relevant information first, then reasons with it.

In practice, you split your data, such as files or wiki pages, into chunks, embed those chunks as vectors, and store them in a vector index. When a query comes in, the agent finds the most relevant chunks and feeds them into the prompt alongside the question.

How it works

Query -> retrieve top relevant chunks -> LLM reads those chunks -> grounded answer
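Here is a rough, standard-library-only sketch of that pipeline. The big assumption: `embed` below is a toy word-count vector, not a real embedding model, and `ToyVectorStore` is a plain list, not a vector database. All function names, the sample documents, and the prompt wording are made up for illustration. A real system would swap in an embedding model and a proper index, but the chunk, embed, retrieve, and prompt steps keep the same shape.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 12) -> list[str]:
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding (assumption): lowercase word counts instead of a learned vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ToyVectorStore:
    """Keeps (chunk, vector) pairs in a list and ranks them by brute force."""

    def __init__(self) -> None:
        self._rows: list[tuple[str, Counter]] = []

    def add(self, chunks: list[str]) -> None:
        for c in chunks:
            self._rows.append((c, embed(c)))

    def top_k(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self._rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(question: str, store: ToyVectorStore, k: int = 2) -> str:
    """Put the retrieved chunks in front of the question for the LLM to read."""
    context = "\n".join(f"- {c}" for c in store.top_k(question, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Made-up internal documents, each short enough to fit in one chunk.
docs = [
    "Refunds are issued within 14 days of a return being received.",
    "The VPN client must be updated before connecting from a new laptop.",
    "Expense reports are due on the fifth business day of each month.",
]

store = ToyVectorStore()
for doc in docs:
    store.add(chunk(doc))

print(store.top_k("When are refunds issued?", k=1))
# -> ['Refunds are issued within 14 days of a return being received.']
print(build_prompt("When are refunds issued?", store, k=1))
```

The string returned by `build_prompt` is what you would send to the model. The model call itself is left out because it depends on whichever provider you use.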

With RAG, your agent answers with actual evidence from your data, which typically cuts down on guesswork and hallucination.

Pillar 3: Act Efficiently - Skills

If an agent can take actions, whether that means running code, calling an API, or manipulating a tool, it needs to do so efficiently.

Without a skill system, you would end up stuffing the same lengthy instructions into every prompt to enable those actions. That wastes tokens and time.

A modular skills approach introduces self-contained abilities or routines the agent can load only when needed. Instead of carrying instructions for every possible tool all the time, the agent uses a skill manager to pull in the right skill, meaning a pre-defined prompt or action blueprint, on demand.

That means it invokes tools like Git, Docker, shell scripts, or Python only when the task truly requires them.

How it works

Query -> LLM processes request -> skill manager fetches the needed skill -> specific tool or action is executed -> result returned to LLM -> agent outputs final answer
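One way to picture that flow is the sketch below. Everything in it is hypothetical: `Skill`, `SkillManager`, the loader functions, and especially `choose_skill`, which is a keyword match standing in for the decision the LLM would make. The actions are simulated and return a string, so nothing actually runs Git or Python. The part to notice is that a skill's detailed instructions are only built the first time the skill is fetched.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Skill:
    name: str
    instructions: str          # detailed prompt text, only loaded on demand
    run: Callable[[str], str]  # the action this skill performs


class SkillManager:
    """Holds cheap one-line summaries up front and loads full skills lazily."""

    def __init__(self) -> None:
        self._summaries: dict[str, str] = {}
        self._loaders: dict[str, Callable[[], Skill]] = {}
        self._loaded: dict[str, Skill] = {}

    def register(self, name: str, summary: str, loader: Callable[[], Skill]) -> None:
        self._summaries[name] = summary
        self._loaders[name] = loader

    def catalog(self) -> str:
        """The only skill text that would sit in every prompt: one line per skill."""
        return "\n".join(f"{name}: {summary}" for name, summary in self._summaries.items())

    def names(self) -> list[str]:
        return list(self._summaries)

    def fetch(self, name: str) -> Skill:
        # Build the full skill the first time it is needed, then reuse it.
        if name not in self._loaded:
            self._loaded[name] = self._loaders[name]()
        return self._loaded[name]

    def loaded(self) -> list[str]:
        return sorted(self._loaded)


def load_git_skill() -> Skill:
    # Simulated action: describes the work instead of running Git.
    return Skill("git", "Detailed Git instructions would live here.",
                 lambda task: f"[git] would handle: {task}")


def load_python_skill() -> Skill:
    # Simulated action: describes the work instead of executing code.
    return Skill("python", "Detailed Python sandbox instructions would live here.",
                 lambda task: f"[python] would handle: {task}")


def choose_skill(query: str, manager: SkillManager) -> Optional[str]:
    """Stand-in for the LLM's decision (assumption): match a skill name in the query."""
    words = set(re.findall(r"[a-z]+", query.lower()))
    for name in manager.names():
        if name in words:
            return name
    return None


def handle(query: str, manager: SkillManager) -> str:
    name = choose_skill(query, manager)
    if name is None:
        return "answered directly, no skill loaded"
    return manager.fetch(name).run(query)


manager = SkillManager()
manager.register("git", "version control tasks", load_git_skill)
manager.register("python", "run Python snippets", load_python_skill)

print(handle("What is a monorepo?", manager))         # -> answered directly, no skill loaded
print(manager.loaded())                               # -> []
print(handle("Use git to tag the release", manager))  # -> [git] would handle: Use git to tag the release
print(manager.loaded())                               # -> ['git']
```

After both queries, only the Git skill has been loaded. The Python skill's instructions never entered the picture, which is the token saving this pillar is about.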

With a skills-based approach, your agent stays lean, focusing on general reasoning until a concrete action is required, then efficiently executing just that piece. No superfluous instructions. No repetitive overhead.

The big picture

- MCP gives your agent a universal connector to integrate with anything.

- RAG ensures your agent can find the knowledge it needs on the fly.

- Skills make your agent execute tasks efficiently without bloating every prompt.

These are not nice-to-have extras. They form the foundation for any AI agent that is going to thrive in production.

As this space matures, agents built on these pillars tend to be more scalable, accurate, and adaptable than those built without them.